Is the app layer where AI proves its value?

The next leap in AI won’t come from new models alone—the app layer will be what makes new technology stick.


Hero illustration by Zoé Maghamès Peters

“Right now we’re in the MS-DOS era for AI, where the prompt is the interface,” Figma CEO Dylan Field recently wrote. In other words, it’s a technology that’s powerful only if you know how to command it. The sentiment that it’s early in the use of generative AI seems pervasive: Ethan Mollick, an associate professor at Wharton and AI expert, says there’s a “capabilities overhang,” meaning untapped possibilities inside today’s models. Earlier this year, The Economist declared an “AI trough of disillusionment,” arguing, “For many companies, excitement over the promise of generative artificial intelligence (AI) has given way to vexation over the difficulty of making productive use of the technology.”

Released in 1981, MS-DOS was a text-based operating system from Microsoft that required users to type commands to run programs and manage files, making it difficult for non-technical users to operate.

We’ve seen this before. Real change doesn’t happen until builders design key human interactions and invest in the app layer, the layer that turns tech infrastructure like large language models (LLMs) into usable tools for everyday consumers. What brought personal computers into the mainstream wasn’t MS-DOS itself, but what came after: graphical user interfaces (GUIs), which let people click, drag, and navigate without memorizing commands. The same pattern played out with the internet, which only became broadly useful once browsers, search engines, and everyday web apps turned it from an academic tool into something anyone could use. An app layer took phones from a way to call and text people to a ubiquitous tool. The smartphone didn’t ship with Uber, DoorDash, Facebook, or Instagram; all of these apps were built by dedicated teams who turned new technology into tools that help people thrive.

The Apple Macintosh, released in 1984, was the first widely available computer with a GUI, and Microsoft Windows layered a similar interface on top of MS-DOS, opening computing to everyone and eventually making the graphical interface the standard.

But it’s not quite enough to simply build an app layer. As technology evolves, the quality of design details that shape how people use a product determines whether it’s widely adopted or fades into the background. The rise of the internet saw dozens of browsers and search engines emerge, but the ones that defined the web did so by combining new functionality with clean, intuitive design that encouraged everyday use. The smartphone era grew out of apps that unlocked brand-new possibilities, but it was the interaction patterns that made them feel natural—gestures like pinch to zoom, inertial scrolling that glides with momentum, and Uber’s live map—that gave the most successful apps their staying power.

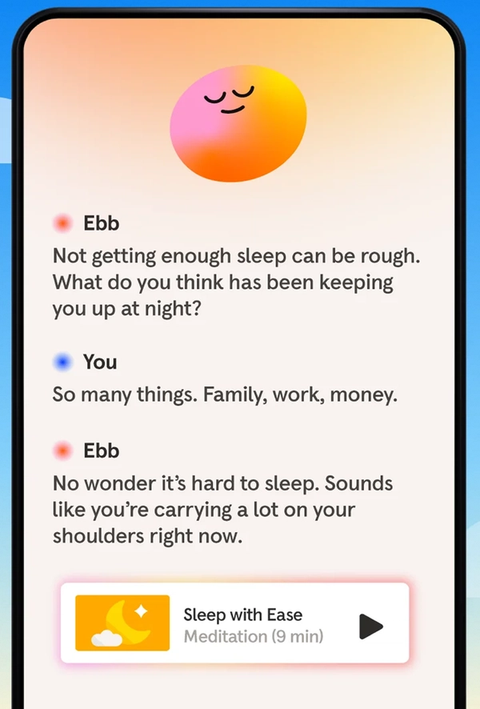

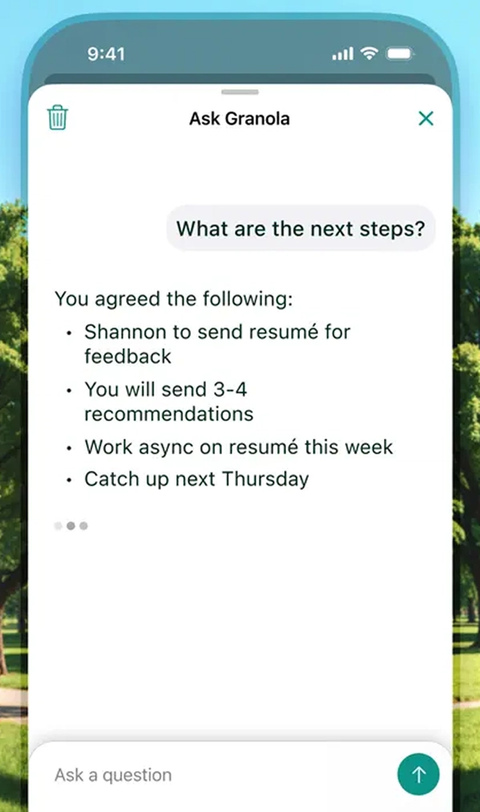

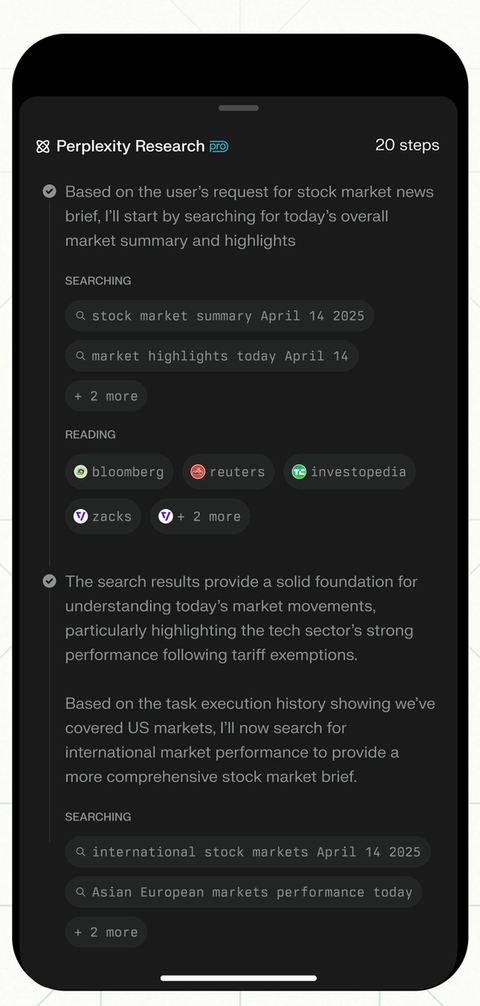

An app layer for AI will do the same. Most people won’t leverage the models themselves; they’ll turn to products that translate raw capability into useful actions. These won’t just be LLM wrappers, but entirely new ways of interacting with the technology. And, just as those smartphone gestures made apps intuitive, new interaction patterns will emerge at the app layer that make working with AI more natural and enjoyable.


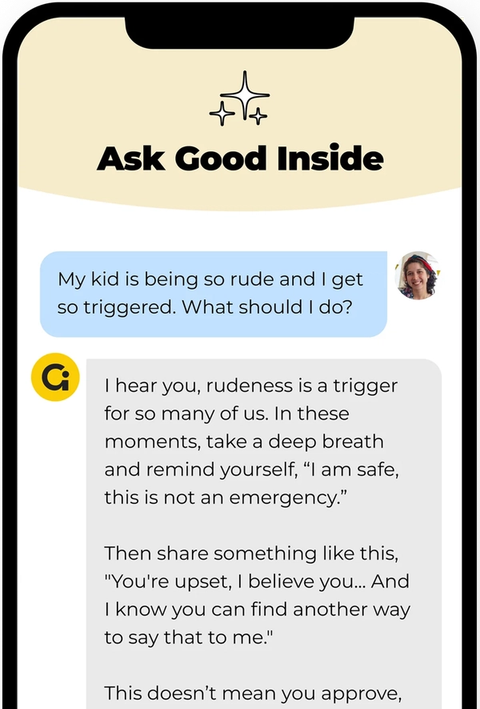

The early signs of this transformation are already visible. Across fields from creative work to mental health and parenting, AI apps are emerging that feel less like chatting with a computer and more like using tools that are native to the problems they solve. I’ve already seen this play out in my own life. The Good Inside app, which trains its parenting advice chatbot on the work of therapist and parenting coach Dr. Becky, won me over both for its quick, accessible guidance and for its simple, easy-to-use design. Though I was skeptical about asking AI for parenting support, I was willing to use it to help with my child’s bedtime, a truly fraught time for any toddler parent. My vague prompts, like “bedtime is really hard, I need some help,” were met with an empathetic response, followed by clear and specific advice presented in simple cards. The clean font, pale yellow interface, and even the small typing animation added up to an experience that felt calming, reassuring, and tailored to me as a parent.

I could have the same discussions with an off-the-shelf chatbot, but it wouldn’t be as impactful. The Good Inside app was intentionally built to blend content, tone, and interaction design in a way that feels supportive and adapts to each user’s context. That same kind of adaptation will matter across AI apps—interfaces need tuning to reflect the needs of the people they serve, and design interactions will need to vary by user, whether that’s parents, lawyers, doctors, or designers.

Beyond my own experience, there are other clear signals that the app layer is taking shape. When GPT-5 launched in early August, the most striking change wasn’t its expanded capabilities but the simplification of the model picker in ChatGPT, an interaction design choice that sparked a strong emotional response from users. These reactions make it clear that design decisions at the app layer often outweigh model advances in the eyes of everyday users. This doesn’t mean the models that provide raw capabilities aren’t critical, but it does mean the way those capabilities are packaged and delivered is what most people will notice first.

Atlassian’s recent acquisition of The Browser Company points to how even familiar tools like the browser could be reimagined as part of the AI app layer, moving from a passive holder of tabs to an active interface that helps apps work together.

That’s exactly what makes this moment so thrilling for teams—the breakthroughs come not just from the models, but from how designers, developers, and product managers turn them into apps people care about. What matters most is the experience these apps create and the feelings they elicit: Does the user feel supported as a parent? Do they feel inspired as an artist? Confident as a lawyer? Design quality comes from understanding those needs in the moment and delivering through the details of interaction.

Success today comes less from features and more from how products make people feel. Learn more about why emotional resonance is the new competitive moat.

For those building with this technology, the rise of the app layer should be exciting, offering an opportunity to shape how AI feels to use. Product builders will need to choose and create interactions that surface AI outputs in a way that feels seamless and satisfying, while also ensuring those choices are supported by reliable systems that can scale. We’ll likely see a flood of new entrants across industries, all aiming to transform the way we interact with AI in our daily lives. Some will stand out through design, some will blend into the pack, and maybe a few will become as transformative as the GUI was for computing.

We surveyed 2,500 Figma designers and developers to get their perspective on how AI is changing how they work and what they work on. Read more in Figma’s 2025 AI report.