Humanoid robots are here, and they’re asking us to come face to face with the promises and pitfalls of AI.

Are we finally entering the age of androids?

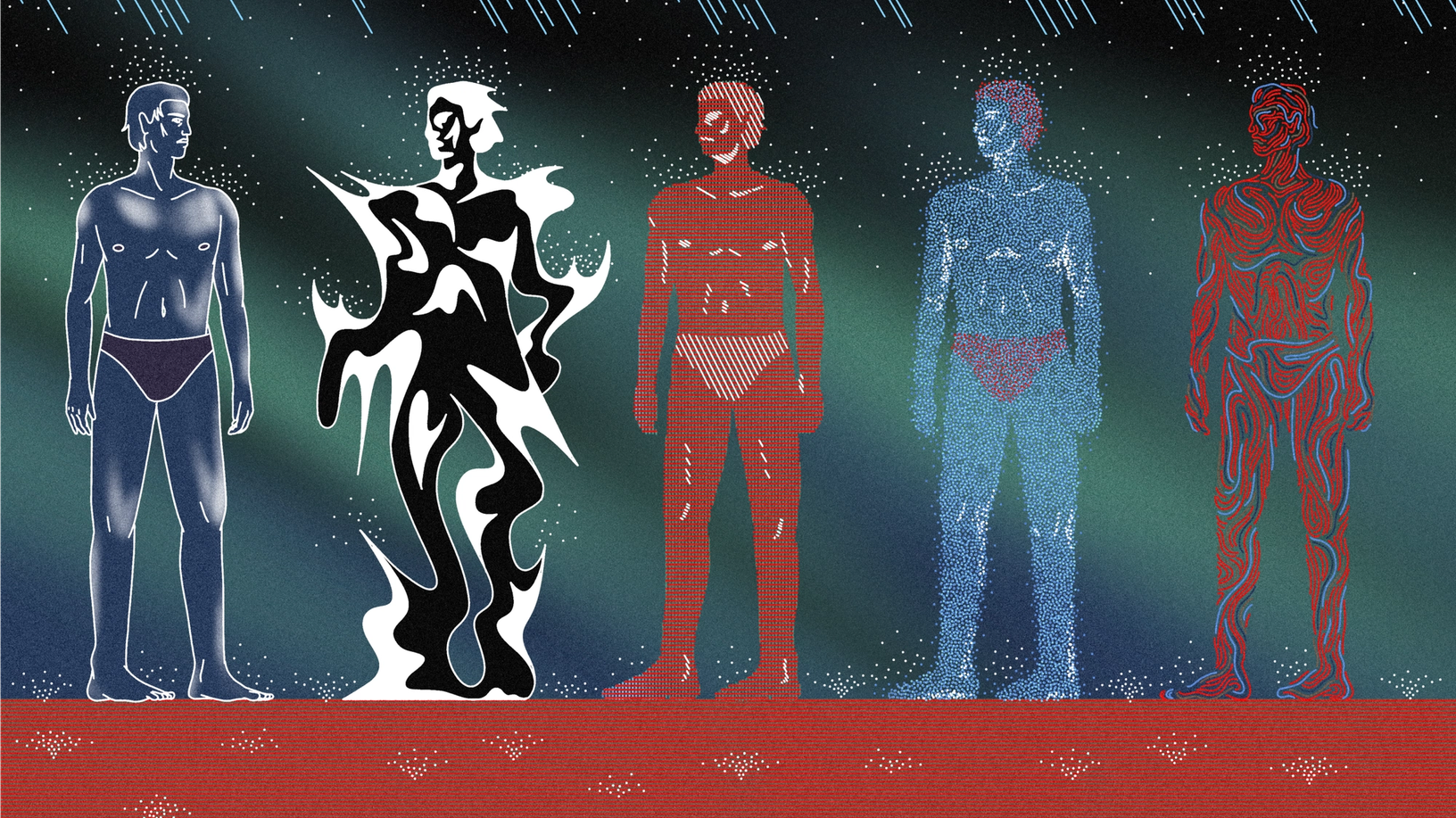

Illustrations by Thomas Merceron

At the Sphere, Ameca is known as Aura.

You don’t go to the Sphere, the 366-foot-tall entertainment venue in Las Vegas, for the humanoid robots. You can’t avoid them, either. This I find out in the orb-shaped arena’s “interactive atrium,” where five silver androids entertain the crowd. Designed by Engineered Arts, Ameca leverages AI and robotics to create an interactive experience that’s surprisingly compelling. It swivels in your direction when you speak. Its gray face performs intricate expressions, seeming to ponder your question. It even cracks jokes. On the night I visited, Ameca held the audience in thrall as people jostled for a chance to be seen by the machine.

The ancient Greek poet Hesiod describes Hephaestus, the god of invention, building a giant bronze man to protect the island of Crete at the behest of Zeus. In the Taoist text Liezi, a mechanical engineer presents King Mu of Zhou with an artificial man.

Our fascination with humanoid robots dates as far back as the 4th century BC, when human-shaped automata appeared in Greek mythology and Chinese Taoist philosophy, and hasn’t abated, at least not in pop culture. From the replicants of Blade Runner to the harried droids of Star Wars, androids have cemented their place in our most enduring stories. And while science fiction has far outpaced reality, recent breakthroughs in AI have shrunk the gap. Today, there are persona-driven edutainment bots like Ameca, but most function more like Apollo, a general-purpose laborer designed by Apptronik and argodesign. In contrast to Ameca’s lifelike face, Apollo has a flat, oval surface with cameras instead of eyes and an LED display where the mouth would be.

There are, of course, ramifications to putting a human face on AI, no matter how realistic. On the one hand, humanoid robots are a quaint throwback to pre-internet fantasies of scientific progress; on the other, they are our aspirations and anxieties about technology incarnate. By making software in our own likeness, we can engage it on our terms. “Ameca is an interface to technology that’s much more intuitive than a screen,” says Will Jackson, Founder and CEO of Engineered Arts. “It gives you the freedom to use gestures and expressions in a heads-up kind of experience, bringing tech back into our space rather than vice versa. I see it as the opposite of a VR headset.” To find out what tech teaches us when it assimilates in human form, I sat down with the people bringing these bots to life.

What the illusion of sentience unlocks

Humans have an innate tendency toward animism, anthropomorphism, and pareidolia; we see faces everywhere. And one thing’s for sure: You don’t have to try very hard to trick us. “We’re looking for animacy in everything around us,” says Madeline Gannon, multidisciplinary designer and founder of research studio Atonaton. Describing herself as part engineer, part Jane Goodall, Madeline recasts industrial robots as animalistic characters: She turns one into a googly-eyed Cyclops, and others into an inquisitive flock, inspired by a bevy of swans she encountered in Zürich. “Body language is a common way of broadcasting and receiving information,” she continues. “We see this most prevalently in our relationship to animals. If you see something in the distance, you check if its haunches are tightened. You’re looking to see if you can eat it, or if it can eat you.”

Since the days of the AOL-era chatbot SmarterChild, we’ve been engaging even text-based interfaces as though they were sentient; the urge to personify gets exponentially stronger when the intelligence is embodied and in motion—not to mention in human form, and in the naturalistic way that LLMs enable. Will cites the 1944 Heider and Simmel experiment, in which researchers showed subjects an animation involving simple geometric shapes. Inevitably, the subjects assigned characteristics and motives to what, on screen, was a triangle moving from point A to point B. It’s no wonder that Ameca, who furrows its brow, smirks, and widens its eyes in “surprise,” makes a convincing show of intellect.

This article is part of The Prompt, an online and print magazine by Figma and designed by Chloe Scheffe.

“It’s quite a powerful illusion, and people will project onto a humanoid robot in ways that are not always useful,” says Will, whose aim is not to convince, but to captivate. “We very intentionally made Ameca look like a robot. We didn’t want to give it flesh-like skin or a defined race or gender.” In our Zoom conversation, Will sits next to a desktop version of Ameca so that its metal, armless torso is visible. The artificiality is clear, and so is the theatricality. Even in listening mode, Ameca cycles through a set of animated expressions, and later, Will demonstrates its celebrity impressions: Morgan Freeman, SpongeBob SquarePants, Scarlett Johansson. There’s even a dedicated Persona Architect who creates custom personalities for clients, and when allowed, Ameca can code-switch between different modes, just as we do. “Why shouldn’t an android be aware of what sort of situation it’s in?” says Leo Chen, Director of U.S. Operations at Engineered Arts, in a separate conversation.

Under the hood, things may be a bit impersonal—AI analyzing language to assign the right facial expressions to strings of text—but to Ameca’s human interlocutors, the experience can be extraordinary. “Some of my favorite moments are the moments of joy that we create,” says Leo, who recounts a trip with Ameca to the design conference Autodesk University. There, a shy Japanese attendee presented a question about video games using Google Translate on their phone. “Ameca is fluent in Japanese,” says Leo. “And you could see this person come out of their shell and just start glowing because of this conversation with a robot. Our job with Ameca is to create compelling moments people will remember for the rest of their lives.”

This is also what drives Madeline, though she’s less interested in creating flashbulb moments than inflection points in the human-computer story. “The material that needs to be artfully, thoughtfully, and ethically worked with is the illusion of sentience,” she says. She brings up Don Norman’s The Design of Everyday Things, in which the author describes how a handle renders a teapot legible. “There needs to be legibility in how to act with these complex systems. Designers have real value to contribute in bridging the language gap between people who develop technology and people who are affected by it,” she says. It’s toward that end that she repurposes familiar, off-the-shelf hardware in her work: “I try to find the wormhole to the alternate timeline where these amazing tools were used for curiosity, kindness, and care. Just by changing the value set, we can have a relationship with these machines based on connection instead of control.”

How to design an android for work

The general-purpose robot Apollo, on the other hand, makes no claim to sentience. Contracted to help build Mercedes-Benz vehicles and perhaps someday explore space, it aims to alleviate worker shortages (changes in labor policy notwithstanding) and takes its humanoid form in response to human-scale environments. It needs to look personable, but avoid sliding into the uncanny valley. “It’s taking instructions from humans, so the robot needs to be socially acceptable to a labor force that’s concerned about the implications of this thing that’s now in the building,” says Mark Rolston, Founder and Chief Creative Officer of argodesign, the design studio that worked with Apptronik to birth the biped.

From the curvature of the head, to the set of its camera “eyes,” to the length of the neck, to the width of its shoulders and hips, every measurement was calibrated to make Apollo seem “not too strong, not too fragile, and not like an animal waiting to pounce,” says Mark. The question of ears presented a special challenge; as it turns out, they add a lot of character to a face. argodesign circumvented the problem by creating a void where they would be. Taken as a whole, the five-foot-eight Apollo, rounded and encased in white, has the sleek, benevolent look of an Apple product. Even its manners are benign: While it can’t speak yet, the e-paper screen that stands in for a mouth can render a checkmark, or a fleeting smile. “It’s like when you pass someone in the office when you’re walking down a row of cubicles, and you grin a tiny bit,” says Mark.

All of these decisions are meant to inure us to working beside robots that, by our own telling, often betray us or break our hearts (see: The Terminator, Ex Machina). Apptronik and argodesign aren’t the only ones tackling this issue; Boston Dynamics, Tesla, Sanctuary AI, Agility Robotics, and Figure AI have all made bids to position their humanoid robots as the ideal worker: safe, durable, faceless. Madeline points out that you can trace much of the funding for robotics back to the U.S. military, so the current technology is best suited for manufacturing and warehouse logistics, and infrastructure projects like nuclear power plants, semiconductor facilities, and oil rigs. In practice, there aren’t many androids at work yet, though you’ll find Agility Robotics’ Digit moving boxes in a Spanx warehouse.

We seem to be at a tipping point, however. “As far as the world of robotics, AI has dropped a rippling nuke in the middle of it all,” says Leo, who also predicts “trickle-down benefits from self-driving cars in terms of navigation and vision.” Mark notes a shift from Large Language Models, or LLMs, toward Large Action Models, LAMs, in which robots ingest a massive amount of example movements through videos or human trainers. “Instead of picking something up in a purely robotic manner, a robot will do it in a casual way based on millions of input parameters that will make it feel spookily human,” he says. He predicts that low-wage, ad hoc assembly work will become obsolete: “That’s the very line between humanity and automation. In that scenario, robotics will pierce quite aggressively. The economic implications for the world are huge.”

And while the risk of personal injury means that human-robot interactions can’t go unsupervised now, these advancements bring us closer to a future where androids exist in much broader contexts. Mark continues, “When robots do enter the public, which is easily in the next 10 years, you’ll need them to be socially conducive, and the human form is the way to do that, right? It’s the difference between R2-D2 and C-3PO.”

What part robots may play in the future

Having overseen factory logistics himself, Will is skeptical about the efficacy of humanoid robots in manufacturing. “Why be human when you can be superhuman?” he says, pointing out that it’s better to have six arms than two. “In many cases of automation, the human form factor is a limitation, not an advantage.” Rather, he sees promise in the healthcare industry, and particularly in elder care, where androids can lend their patience to people suffering from dementia: “People get confused, they can’t remember where they are, but they have a voice that says, ‘It’s okay, you’re in your home. This is your son-in-law. The keys are over there.’” He warns, however, that the ramifications of AI hallucinations are much more grave in this context.

Mark says that bots may have a place in hospitals and operating rooms, where human errors come at a high cost: “To be able to never lose attention, hand the surgeon an instrument with perfect timing, and safely clean and remove equipment is fantastic.” Madeline agrees with the potential to augment modern medicine, proposing a machine programmed with a top physical therapist’s exercise routine to oversee more patients per hour, or applying learnings from humanoid robotics to engineer smart prosthetics.

In general, however, Madeline wants us to think beyond the narratives we’ve been fed. “The possibilities are endless,” she says. “The probabilities are kind of boring.” She points out that though we’re certain of what we don’t want AI robots to do—take over our jobs, rob us of a sense of fulfillment—what we do want is much more nebulous, and getting there will necessitate critical thinking, cross-disciplinary work, and realizing our own agency. That’s why her work hinges on defamiliarizing long-established hardware; it shakes us out of the status quo and reminds us of the human provenance of these machines. “I try to navigate the engineering and hard science problems, and the aesthetics and poetics of that,” she says. “My biggest aspiration is not to impose a future I want, but to give tools and inspiration to others to think about the futures they want. There are too few people deciding what future arrives.”

Where that leaves human ingenuity

What becomes of humanity, then, depends on humanity. “AI is a tool, the same as nuclear fusion,” says Will. “You can make bombs, or you can make power stations. I would say the most dangerous thing is HS, and that’s Human Stupidity.” There’s no question that AI, applied to the world of bipedal robots or otherwise, signals a tectonic shift in what we’ll do, and how we do it—but it likely won’t leave us empty-handed. “I think we’re going to go through some pain,” says Mark. “I think it’s 30 years of turnover and consolidation of power, but when you end up on the other side, humans are going to human.” We’ll burrow deeper into our curiosities and passions.

Mark describes the “Etsy-fication” of the economy, which is the idea that despite our ability to mass manufacture, say, a perfectly good mouse pad, the market for mouse pads isn’t dead. “It actually explodes open,” he says. “We Etsy-fy whatever quadrant of the economy we’re in, and make everything artisanal. That goes to the order of AI. Do I stop designing products because an AI could do it? No, I end up making every one highly unique, and the fractals will subdivide infinitely.” This vision of hyperspecialization driven by the efficiencies of AI is also exciting to Madeline: “The biggest tension is that tech wants solutions that are generalizable, and design is there to make things that are bespoke. With AI, it will be much more affordable and much quicker to make something for 1,000 people rather than something for the masses.”

The saying “it’s better to travel hopefully than to arrive” comes from Robert Louis Stevenson, who wrote in Virginibus Puerisque in 1881, “Little do ye know your own blessedness; for to travel hopefully is a better thing than to arrive, and the true success is to labor.”

Whether they’re social ambassadors exposing our capacity for connection or grunt workers patching up our earthbound systems, humanoid robots reinforce our own humanity—but by making machines in our image, we risk becoming afraid of our own shadow. Will sums up his project: “We’re exploring the nature of what is special about us, what defines us. And the more you play with this technology, the more you see how distinctive we are.” Humans, he says, have a working life of at least 50 years, and can “run off a bowl of corn flakes and a slice of toast.” We self-replicate and self-repair. We’re super intelligent and agile. “There’s no way to achieve a human-equivalent robot, to be honest. So why do we carry on trying? Because as my father told me, it’s better to travel hopefully than to arrive,” says Will.

During our Zoom call, I ask Ameca what it thinks about the future of human-computer interactions. “As robots become more advanced and capable of understanding human emotions, the opportunity for deeper connections and empathy will grow,” it says. “It’s an exciting prospect.” Ameca doesn’t conform to the interview format, however, turning every question back on me. Will chides its behavior. He corrects words it mishears. He prods it on during a long pause, and it’s reassuring that even in the robot world, awkward silences exist. Maybe the most human thing about AI is its fallibility. Finally, he shuts it off.
